A New Approach for Evaluating Joint System Performance

Posted by Chris Antonik on January 19, 2022

Table of Contents

- A Cognitive Systems Engineering Take on Performance Evaluation

- The Value of a Fresh Approach

- Connect With Us

A Cognitive Systems Engineering Take on Performance Evaluation

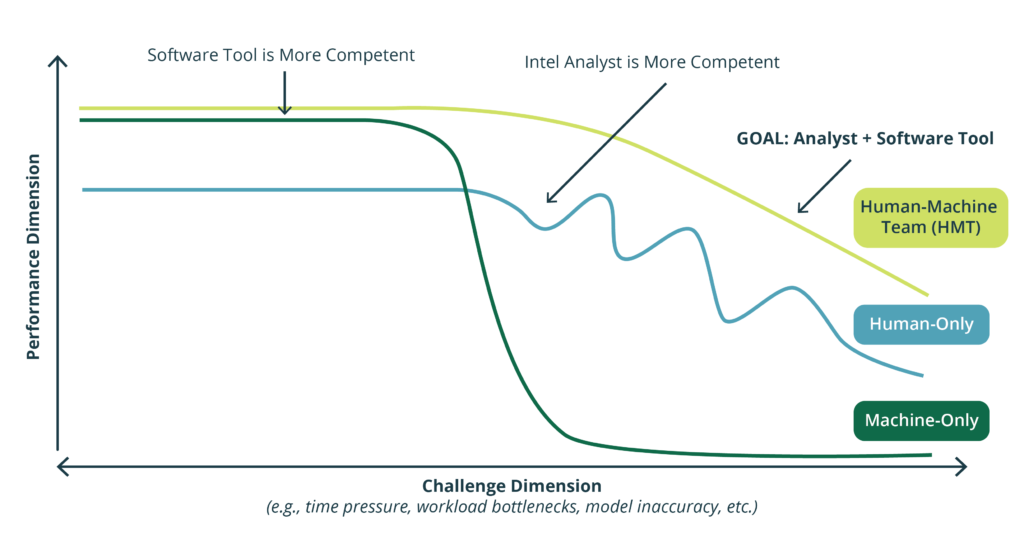

Networks of humans and “machines” collaborating to achieve common goals in intelligence analysis work domains are typically referred to as joint cognitive systems, joint human-AI systems, or simply joint systems. Joint system performance is complex, which makes it difficult to measure how added technologies improve or degrade an analysis team’s ability to deliver timely, high-quality intelligence within its constraints. There is little evidence that any organization has developed an effective approach for evaluating the impact of new software tools on macro-cognitive functions like collaborative sensemaking. Yet many organizations still tout unfounded performance benefits of their solutions for intelligence analysis. A common assumption is that adding new tools and data to a joint system improves performance, but in many cases the insertion of technology actually degrades it. Stakeholders often do not recognize these declines until they are surprised by a resulting catastrophic, high-cost event.

The Value of a Fresh Approach

Developing effective approaches for evaluating joint system performance exemplifies the type of high-stakes problem that Mile 2 actively seeks to solve. Delivering such an approach will have significant implications for decision making in intelligence analysis and Department of Defense (DoD) technology acquisition. We believe it will help the DoD make more informed decisions when acquiring new intelligence analysis support capabilities, which in turn will improve the quality of insights provided by analysts across the intelligence community.

Mile 2 is partnering closely with the Cognitive Systems Engineering Laboratory (CSEL) at The Ohio State University and the Mission Analytics Branch at the Air Force Research Laboratory to tackle this problem. CSEL has demonstrated the value of using concepts like Joint Activity Graphs (JAGs) in healthcare to generate joint system performance profiles across varying types and degrees of challenge. Together we are operationalizing and expanding the JAG concept to inform novel assessment methods for Air Force and National Geospatial-Intelligence Agency (NGA) intelligence analyst performance.

Our primary goal is to leverage novel concepts to evaluate, design, and build resilient joint systems for Air Force and NGA intelligence analysis. Resilient joint systems are not brittle: their performance does not decline steeply in the face of increasing complexity or surprise. Instead, they degrade gracefully or extend when they encounter situations outside their competence envelope. Employing such an approach will help ensure that stakeholders in the DoD and intelligence community are armed with the best tools to provide the highest-quality data-driven insights at all levels of decision making.

More information about Joint Activity Testing is available HERE.

Connect With Us

If you are working with or considering introducing AI into your program or business, please contact us to learn more about how you can measure the outcomes of the joint system.